A couple of weeks ago I set myself a task of developing a mobile application using HTML5 that could be deployed onto as many popular mobile platforms as possible with the minimum amount of modification. This post describes that task and conclusions drawn from doing it.

Firstly I should describe what I mean by mobile application, since HTML5 is typically used via a browser and a URL. In this case I mean a local application that can be bundled and deployed from an App Store/Marketplace etc. and looks and feels the same as a native application. Recently there has been a lot of interest in using HTML5 to develop mobile applications in this way, so I wanted to test the water.

Lots of options

A quick Google search on the subject will find a lot of different technologies and frameworks that claim to provide just what you need to attempt what I was attempting. I set myself some basic design principles and set about evaluating as many platforms as I could find. I am sure there are ones I missed, but what follows is the cast that I played with. All of them do what they say they can, but there are tradeoffs, and to be honest your choice will depend on the tradeoffs you want to make. So set yourself some guidelines. Mine were quite simple. Firstly, I wanted a lot of flexibility, and as such wanted good access to HTML5/CSS and JavaScript without being forced to live in one or the other. Whatever I chose had to support mobile device features such as touch, rotation, different viewport dimensions and access to device sensors. I also didn’t want to learn a complex new object model in order to develop my app, so reuse of existing approaches was a good thing. And finally, I didn’t want to pay licensing fees.

I tested a number of multi-platform technologies such as Jo, Sencha, Titanium, and jQTouch, as well as one (Enyo) that wasn’t billed as multi-platform; see my previous post for details. They all worked to a certain extent, but for one reason (e.g. lack of touch support) or another (e.g. a complex JavaScript framework) I ruled them out. I finally landed on jQuery Mobile. Although in beta and not as polished as something like Sencha Touch, it was the best option in that it satisfied all my criteria for a framework. It embraces HTML5 and CSS (rather than encapsulating them) while providing a powerful JavaScript framework with a lot of nice features for a mobile developer. Finally, as its name suggests, it is built on jQuery, which means that a lot of current web developers will be able to take advantage of its features without skipping a beat.

Going Cross Platform

An application framework provides the basis for creating the look and feel of an HTML5 mobile application, but it doesn’t help with creating cross-platform applications of the kind I was interested in. Such frameworks are fine for web-based applications accessed via a browser, but not for an app that is deployed and launched like a native app. Luckily the missing piece can be supplied by a great piece of technology called PhoneGap. PhoneGap not only provides an API to access many of the common sensors built into mobile platforms (e.g. location, accelerometers etc.), it also provides the application shell which enables you to repurpose your HTML5 look and feel into different mobile environments without (for the most part) changing a line of code. There might be other technologies that do the same, but to be honest I stopped looking once I played with PhoneGap. It is a fairly complete package which is well documented and integrated with the Xcode, Eclipse and Titanium Aptana IDEs, which became the environments I used the most during this task.

The process

So with my choice of application environment sorted, it came down to choosing an app to build. I had decided that the actual app didn’t matter, since it was the journey rather than the destination which was important. Once I had achieved what I set out to do, repeating it for other applications would be easier. So I found a jQuery Mobile tutorial on how to build a feed reader. This tutorial demonstrates how to write a web service whose UI is generated by PHP files as HTML/CSS/JS pages which are loaded by the browser. All the page generation is done on the server, so the tutorial creates a web application in the traditional browser-based model. However, it is a good tutorial, and it allowed me both to learn jQuery Mobile and to build a basis for my local application experiment.

I re-created the application to work without a service component, using jQuery Mobile to generate the UI based on user events. The whole experience was packed into a single HTML file (thanks to the multi-page concept in JQM) and a single JavaScript file to drive the application logic. I then used PhoneGap to provide the shell for applications which could be used on devices. My first test case was to provide the feed reader app for both Android and iPhone, and I later expanded to webOS. Using PhoneGap it would be a simple matter to create an application for all the platforms PhoneGap supports, as long as the platform supports HTML5.
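To make the single-file approach more concrete, here is a minimal sketch of how page and list markup might be generated in JavaScript. The function names and the `{ title, pageId }` entry shape are hypothetical and are not taken from the feed reader source. The `data-role` attributes are the ones jQuery Mobile uses to mark pages, headers, content areas and list views.

```javascript
// Hypothetical helpers: names and data shapes are illustrative only.

// Escape text before placing it inside generated markup.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Build a jQuery Mobile list view from an array of { title, pageId } entries.
// Each item links to another internal page by its id.
function renderEntryList(entries) {
  var items = entries.map(function (entry) {
    return '<li><a href="#' + escapeHtml(entry.pageId) + '">' +
      escapeHtml(entry.title) + "</a></li>";
  });
  return '<ul data-role="listview">' + items.join("") + "</ul>";
}

// Build one internal page. Several of these can live in a single HTML file.
// bodyHtml is inserted as-is, so it must already be safe markup.
function renderPage(id, title, bodyHtml) {
  return '<div data-role="page" id="' + escapeHtml(id) + '">' +
    '<div data-role="header"><h1>' + escapeHtml(title) + "</h1></div>" +
    '<div data-role="content">' + bodyHtml + "</div>" +
    "</div>";
}
```

In the browser the resulting string would be appended to the document body with jQuery, after which jQuery Mobile can enhance it. Keeping the builders as plain string functions also means they can be exercised outside a browser.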

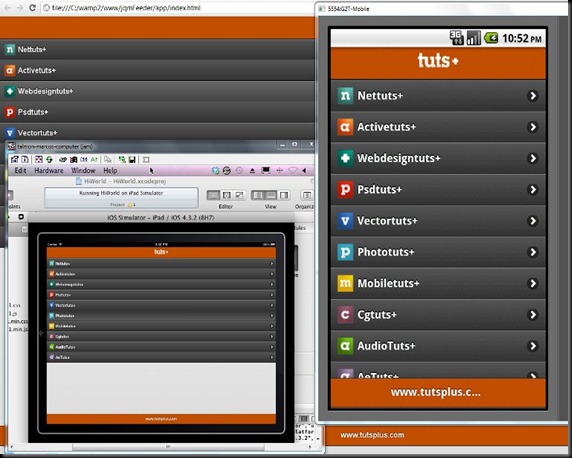

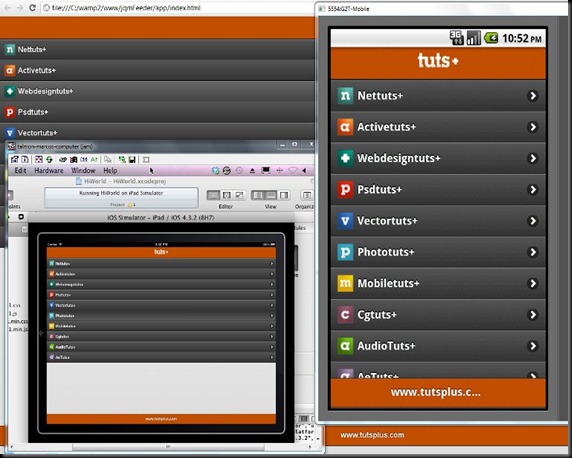

The image above shows the same app running as a local app on three different platforms. On the right it is running in the Android emulator, at bottom left it is running in the iPad simulator under Xcode, and in the background it is running in Chrome on the desktop (more on this later). This is the same HTML/CSS/JS code running on all platforms. The code is simply slotted into the app shell created by PhoneGap.

Conclusions

So I can claim success, right? Well, not exactly. Although it is possible to do what I set out to do, there are a few things that need to be improved before I feel this kind of thing is ready for prime time.

First in line is a decent development environment. I used a combination of Titanium Aptana and the Chrome browser. Aptana managed the source code, while testing and debugging were done in Chrome (which is why I show it above). At the moment both are needed. This was workable but not ideal, and not as seamless as you might like. Tighter integration between the development process and the debugging process would make life a lot easier all around.

One area that really caught me out is catching errors in generated code (e.g. HTML5 or CSS). Inserting code into the DOM is almost inevitable in this kind of work, and it can be (and was for me) the source of a lot of problems. Although Aptana does basic syntax checking and code completion, you need to use validators (the W3C HTML5 Validator and JSLint in my case) to make sure you have clean code. Unfortunately the validators work off static code. If there is an error in any of the dynamically generated code (a closing H2 tag in my case), there is no way to pick it up other than using the DOM browser (in Chrome) and wading through it looking for errors. This is very time-consuming, so a validator that works off generated code would be great.
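As a rough illustration of what checking generated markup could look like, here is a minimal sketch of a tag-balance check that can be run on a generated HTML string before it is inserted into the DOM. It is a hypothetical helper, not part of the W3C validator, JSLint or Aptana, and it is far from a full validator: it assumes every non-void element is explicitly closed and that attribute values do not contain `>`.

```javascript
// Hypothetical helper, not a real validator.
// Elements that never take a closing tag in HTML5.
var VOID_TAGS = ["area", "base", "br", "col", "embed", "hr", "img", "input",
  "link", "meta", "source", "track", "wbr"];

// Returns a list of problem descriptions for a generated HTML fragment.
function findTagProblems(html) {
  var problems = [];
  var open = []; // stack of currently open tag names
  var tagPattern = /<(\/?)([a-zA-Z][a-zA-Z0-9]*)[^>]*?(\/?)>/g;
  var match;
  while ((match = tagPattern.exec(html)) !== null) {
    var closing = match[1] === "/";
    var name = match[2].toLowerCase();
    var selfClosed = match[3] === "/";
    if (VOID_TAGS.indexOf(name) !== -1 || selfClosed) continue;
    if (!closing) {
      open.push(name);
      continue;
    }
    var index = open.lastIndexOf(name);
    if (index === -1) {
      problems.push("unexpected </" + name + ">");
    } else {
      // Anything opened after the matching tag was never closed.
      open.splice(index + 1).reverse().forEach(function (unclosed) {
        problems.push("unclosed <" + unclosed + ">");
      });
      open.pop();
    }
  }
  open.reverse().forEach(function (unclosed) {
    problems.push("unclosed <" + unclosed + ">");
  });
  return problems;
}
```

Calling `findTagProblems` on each generated fragment and logging a non-empty result points at the function that produced the bad markup, which is quicker than hunting for the error in the DOM inspector afterwards.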

The second problem I had to deal with is that, although the same code can run on all platforms and PhoneGap can be used to hide a lot of platform differences, there are still accommodations that need to be made. One of the big ones was the lack of a hardware back button on iOS, which required me to generate a soft button when the app was running on iOS. This isn’t hard, as PhoneGap provides an API to discover the platform, but it is something to be aware of and it requires testing.
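The platform check might look something like the sketch below. The function names are hypothetical. The platform string is assumed to come from PhoneGap's `device.platform` property once the `deviceready` event has fired, and the set of strings matched here is an assumption that should be verified against the PhoneGap version in use. `data-rel="back"` and `data-icon` are jQuery Mobile attributes.

```javascript
// Hypothetical helpers. In a PhoneGap app the platform argument would come
// from device.platform after the "deviceready" event.
function needsSoftBackButton(platform) {
  // Assumption: iOS devices report a string starting with "iPhone", "iPad",
  // "iPod" or "iOS", depending on the PhoneGap release.
  return /^(iphone|ipad|ipod|ios)/i.test(String(platform));
}

// Build a jQuery Mobile header, adding a soft back button only where the
// device has no hardware one. The title is assumed to be trusted text.
function renderHeader(title, platform) {
  var back = needsSoftBackButton(platform)
    ? '<a href="#" data-rel="back" data-icon="arrow-l">Back</a>'
    : "";
  return '<div data-role="header">' + back + "<h1>" + title + "</h1></div>";
}
```

Keeping the decision in one small function means the rest of the UI code never needs to know which platform it is running on.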

Having said the above, I am encouraged that this is actually possible. It really opens up the whole mobile space a little more, and although there are going to be certain apps where pure native code is required, there are an awful lot that can be coded in the same way as I have done. One question I always get asked with respect to using HTML5 is about performance. All I can say in this case is that when I deployed the feed reader app onto my device (a G2) it performed very well. The app loaded fast (much faster than the network version) and was responsive to user actions. There was a delay when retrieving feeds and feed entries, but those were network delays and could be reduced by using caching and prefetch. As it is, jQuery Mobile already manages a lot of that kind of thing.
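For the feed data itself, one simple option is a small in-memory cache with an expiry time, sketched below. This is a hypothetical illustration, not how jQuery Mobile caches pages internally and not code from the feed reader. The clock is passed in as an optional parameter purely so that the expiry logic can be tested.

```javascript
// Hypothetical cache; names and the injectable clock are illustrative only.
function createFeedCache(maxAgeMs, now) {
  now = now || function () { return Date.now(); };
  var store = {};
  return {
    // Remember the entries fetched for a feed URL.
    put: function (url, entries) {
      store[url] = { entries: entries, savedAt: now() };
    },
    // Return cached entries, or null when missing or older than maxAgeMs.
    get: function (url) {
      var hit = Object.prototype.hasOwnProperty.call(store, url)
        ? store[url]
        : null;
      if (!hit || now() - hit.savedAt > maxAgeMs) return null;
      return hit.entries;
    }
  };
}
```

The app would call `get` before going to the network and `put` after a successful fetch, so a feed that was viewed recently is shown without waiting for a request.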

The advantage of creating a local HTML5 app versus a traditional service-based application is that other areas of HTML5 can be exploited. Perhaps the most important one from a mobile perspective is offline operation. Having the application local also cuts down on network access, allowing minimal amounts of information to be passed between a service and an app. These are areas I plan to deal with in subsequent posts.

For those interested, you can get the source code from http://dl.dropbox.com/u/1874766/jqmFeeder.zip so you can play in your own pen. As to what is next, I am now interested in how having HTML5 on the client can and should affect the design of mobile applications. I am eager to look at both offline operation and the use of WebSockets to potentially reduce the battery impact of traditional polling apps. More on this later.